Published on the 09/05/2019 | Written by Heather Wright

Use of ‘dark patterns’ under new scrutiny…

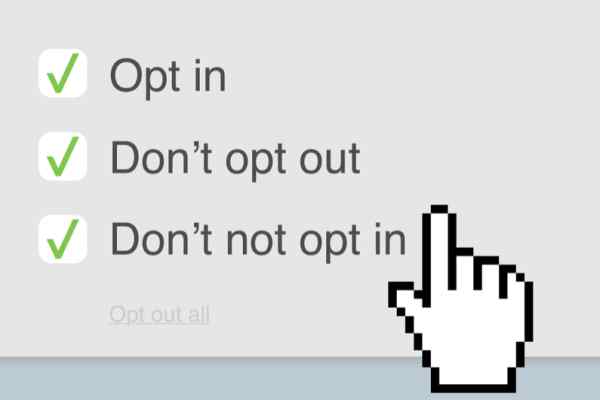

Dark patterns might sound like a sinister master plan from the likes of Dr Evil, but they are very real, and many of us will have experienced them: the signup process that implies the only options are to use your Facebook or Google account, the accidental signup for something you didn’t want…

Dark patterns, a term coined by user interface expert Harry Brignull, who created the Dark Patterns website, refers to deliberately deceptive methods companies use to get users to hand over personal data or take actions they might not otherwise agree to. (For plenty of examples, head over to the Dark Patterns Hall of Shame.)

For years tech companies, including Facebook and Google, have been using these user interface tricks, and the list of tricks is seemingly endless. All of the following are part of the dark patterns trend: the ‘roach motel’, where it is easy to get into a situation such as a premium subscription but hard to get out; Disguised Ads; Friend Spam, where you’re asked for email or social media permissions under a pretence such as finding friends, only to find all your contacts have been spammed with a message purportedly from you; and Forced Continuity, where a free trial ends and your credit card is charged without warning, with cancellation sometimes made difficult.

Just a year ago, Facebook CEO Mark Zuckerberg was testifying before the US Congress about his company’s practices around user privacy. (Facebook’s use of dark patterns has even earned it its own category: Privacy Zuckering, where users are tricked into sharing more information than they intend to.)

Now the methods have sparked action in the US Congress, with two senators, one Democrat and one Republican, introducing legislation to ban dark patterns.

The bipartisan Detour (Deceptive Experiences to Online Users Reduction) Act seeks to make it unlawful to manipulate user interfaces to trick users into giving consent or handing over data, or to use online consumers in behavioural or psychological experiments without their express consent.

The bill also aims to stop websites and online services from using interfaces designed to ‘cultivate compulsive usage’, such as auto-playing the next video, among children under the age of 13.

Speaking at a Senate Commerce data privacy hearing earlier this month, bill co-author Senator Deb Fischer described how dark patterns work: “Users see a false choice or a simple escape route through the ‘I agree’ or ‘OK’ button. This can hide what the action actually does, which is accessing your contacts, your messages, web activity or location.”

“Users searching for the privacy friendly option, if it exists at all, often must click through a much longer process and many screens.”

Dark patterns, and the basic psychology behind them, aren’t new. Common tricks include swapping colours so that red signifies you agree to something, or making the option the company favours less harder to see, whether through pale grey text (a favourite) or a smaller font size.

Brignull coined the term back in 2010, and the mining and use of consumers’ data has risen dramatically since then.

But with privacy laws being tightened, the techniques are facing increasing scrutiny. Fischer cited companies deploying ‘workarounds’ that defeat the EU’s GDPR privacy requirements by creating user confusion.

The US senators aren’t the only ones stepping up the pressure on companies deploying dark patterns. Last year a Norwegian Consumer Council study slammed Facebook and Google for the techniques they use to steer consumers into sharing “vast amounts of information through cunning design, privacy invasive defaults and ‘take it or leave it’ choices”.

But in a world driven by data, moving away from dark patterns might not be an easy ask. It would require services to abandon business models that rely heavily on the data they glean from users in return for ‘free’ services.

The Detour Act, if passed, could also dramatically change testing for platforms that rely heavily on A/B testing, which would be outlawed unless clearly disclosed to users.

For now, however, it remains a case of user beware, and being wise to dark patterns is the first line of defence.