Published on the 03/06/2022 | Written by Heather Wright

Is there any chance of redemption?…

Last week’s news that facial recognition company Clearview AI has copped a fine of more than £7.5 million from the UK’s privacy watchdog continues a long string of high-profile actions against the company. But is there room for technology like Clearview AI?

The Information Commissioner’s Office (ICO) slapped Clearview with the fine – the third largest GDPR-era fine to date, but well under the £17 million it had initially considered in its provisional plans. It also issued an enforcement notice requiring the company to stop collecting and using the personal data of UK citizens, and to delete the data it already holds from its systems.

The ICO found that Clearview AI had been in breach of UK data protection laws.

The action followed a joint investigation with the Office of the Australian Information Commissioner (OAIC). Australia took action last November, ordering the company to delete all images of people in Australia within 90 days and to stop collecting the data.

Other countries, including Canada, Italy and France, have taken similar action, while in the US – which doesn’t have a federal data protection law – the company has agreed to restrictions on how businesses can use the database of images as part of a settlement in a case brought against it by the American Civil Liberties Union. The agreement means Clearview won’t sell access to the database – or provide it for free – to most private businesses and individuals. In the state of Illinois, which has had a Biometric Information Privacy Act since 2008, the company can’t sell access to its database to anyone, including police, for five years.

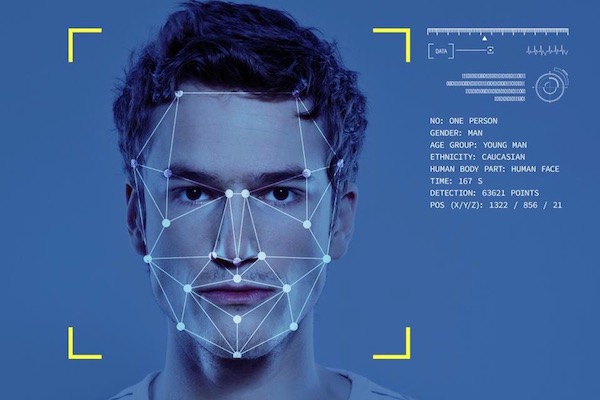

At issue are the right to privacy and growing unease about increased monitoring, along with Clearview AI’s massive database of 20 billion photos of citizens around the world – and more specifically the way that database was obtained: without the knowledge or consent of the people whose images are included. Instead, the data was scraped from the internet, along with information about who the people pictured are. The photos don’t just underpin the company’s facial recognition offering; they are also used as training data, creating one of the most accurate facial recognition tools available, according to NIST tests.

Combined with Clearview AI’s facial recognition technology, or indeed other facial recognition technology, the database can be – and is being – used to identify individuals.

In Australia, it was used by some police forces in trials between October 2019 and March 2020, and in New Zealand, police were busted for conducting hundreds of searches using Clearview AI without any consultation with the Privacy Commissioner.

Clearview has always maintained that the data it uses is publicly available anyway, and that the combination of the image database and facial recognition technology is a powerful ally in crime prevention.

Founder and CEO Hoan Ton-That also says there’s a misconception that Clearview AI operates in real time – enabling companies to pull data in real time about people captured on CCTV footage entering a premises, for example.

“Clearview AI has been instrumental in helping investigators solve thousands of cases including crimes against children, homicides, financial fraud, drug trafficking, sex offenders, anti-human trafficking operations as well as victim and missing person identifications,” the company says.

As well as the issue of privacy, Clearview AI, and indeed facial recognition more broadly, has raised concerns about the high potential for misuse – from police officers using it to stalk potential romantic partners (or enemies), to foreign governments that might use it to perpetrate human rights abuses – a concern also being raised about police departments around the world.

Bias, too, remains a key concern, with facial recognition technology reportedly disproportionately misidentifying people of colour – potentially leading to wrongful arrests.

But is there an argument for the use of Clearview AI and other potential offerings? Some argue yes, in some cases.

Case in point: In 2018, police in New Delhi used facial recognition to scan the images of 45,000 children living in children’s homes and orphanages. In the space of just four days, they identified 2,930 children who had been registered as missing.

There’s also an argument for the use of facial recognition technology to identify victims in online sexual abuse images – not only potentially identifying the victims but also sparing law enforcement from the hours of viewing such images themselves for manual identification.

In Ukraine, Clearview AI is being used to identify Russian assailants and identify the dead.

But the Clearview AI situation highlights the struggle to regulate facial recognition and AI use across borders, and the lack of regulations in place to provide guardrails and establish when and where, if at all, the technology can be used.

Without specific laws and a legal framework to work with, regulators are instead relying on legislation like the GDPR and Data Protection Act, or privacy acts – and in doing so leaving ambiguity for companies to exploit.

Regulations to guide deployment of the technology could provide a guardrail around use.

And without international agreements, there’s also the question of enforcement against companies caught out, like Clearview. The company has already said it doesn’t operate in the UK, so isn’t bound by the country’s data laws, and it’s expected to appeal the fine.

John Edwards, UK Information Commissioner – and former NZ privacy commissioner – says international enforcement is needed.

“This international cooperation is essential to protect people’s privacy rights in 2022. That means working with regulators in other countries, as we did in this case with our Australian colleagues.”

He’s meeting with regulators in Europe this week to discuss further collaboration on the topic.

The EU itself is working on an Artificial Intelligence Act which could ban real-time facial recognition. The act has been held up as having the potential to become a global standard, but it does have limitations – and wouldn’t resolve the Clearview situation. Under the AI Act, facial recognition is banned for police unless images are captured with a delay or the technology is being used to find missing children.

Just days after the ICO fine was announced, Clearview launched its first ‘consent based product for commercial uses’.

The new offering harnesses just the facial recognition technology, sans the controversial database of facial images. There is, however, a question mark over the use of AI trained using ill-gotten images.

While the new offering will require the consent of those whose images are used, Clearview AI remains unrepentant. Its 20-billion-strong database, which is still touted heavily on the company’s website, continues to grow. It held just three billion images back in 2020.